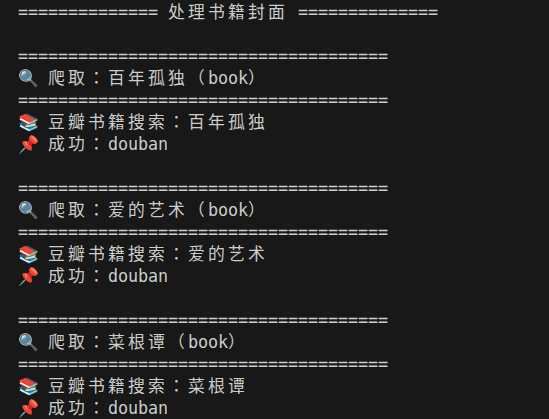

| #!/bin/bash

shopt -s nullglob
set -u

BOOK_IMG_DIR="./img/book_pic"
MOVIE_IMG_DIR="./img/movie_pic"
BOOK_SOURCE_FILE="../../../source/_data/books.yml"
MOVIE_SOURCE_FILE="../../../source/_data/movies.yml"

mkdir -p "$BOOK_IMG_DIR" "$MOVIE_IMG_DIR"

USER_AGENT="Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/128.0.0.0 Safari/537.36 Edg/128.0.0.0"
DELAY=3
IMG_TIMEOUT=20
MIN_IMG_SIZE=5120

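# URL-encode $1. The value is passed as an argument rather than interpolated
# into Python source, so quotes or backslashes in a title cannot break the call.
urlencode() {
    python3 -c 'import sys, urllib.parse; print(urllib.parse.quote(sys.argv[1]))' "$1"
}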

# Succeed only if $1 exists, is at least MIN_IMG_SIZE bytes, and is reported
# as an image by file(1).
validate_image() {
    local FILE="$1"
    [ ! -f "$FILE" ] && return 1
    local SIZE
    SIZE=$(stat -c%s "$FILE" 2>/dev/null || echo 0)
    [ "$SIZE" -lt "$MIN_IMG_SIZE" ] && return 1
    file "$FILE" | grep -qi image || return 1
    return 0
}

# ===================== Douban =====================

fetch_douban() {
    local NAME="$1"
    local TYPE="$2"
    local QUERY SEARCH_URL SUBJECT_ID DETAIL_URL

    if [ "$TYPE" = "movie" ]; then
        QUERY=$(urlencode "$NAME")
        SEARCH_URL="https://movie.douban.com/subject_search?search_text=${QUERY}"
        echo "🎬 Douban movie search: $NAME" >&2
    else
        QUERY=$(urlencode "$NAME")
        SEARCH_URL="https://book.douban.com/subject_search?search_text=${QUERY}"
        echo "📚 Douban book search: $NAME" >&2
    fi

    # Take the first subject ID that appears in the search results.
    SUBJECT_ID=$(wget -qO- --user-agent="$USER_AGENT" \
        --header="Accept: text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,*/*;q=0.8" \
        --header="Accept-Language: zh-CN,zh;q=0.9,en;q=0.8" \
        --header="Referer: https://www.douban.com/" \
        --header="Connection: keep-alive" \
        --header="Upgrade-Insecure-Requests: 1" \
        --tries=2 "$SEARCH_URL" \
        | grep -o '/subject/[0-9]\+' \
        | head -n 1 \
        | grep -o '[0-9]\+')

    # For books, retry once with cat=1001 added to the query.
    if [ -z "$SUBJECT_ID" ] && [ "$TYPE" = "book" ]; then
        SEARCH_URL="https://book.douban.com/subject_search?search_text=${QUERY}&cat=1001"
        SUBJECT_ID=$(wget -qO- --user-agent="$USER_AGENT" \
            --header="Accept: text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,*/*;q=0.8" \
            --header="Accept-Language: zh-CN,zh;q=0.9,en;q=0.8" \
            --header="Referer: https://www.douban.com/" \
            --header="Connection: keep-alive" \
            --header="Upgrade-Insecure-Requests: 1" \
            --tries=2 "$SEARCH_URL" \
            | grep -o '/subject/[0-9]\+' \
            | head -n 1 \
            | grep -o '[0-9]\+')
    fi

    [ -z "$SUBJECT_ID" ] && return 1

    DETAIL_URL="https://${TYPE}.douban.com/subject/${SUBJECT_ID}/"

    # Print the first cover URL on the detail page, with any _s/_m/_l suffix removed.
    wget -qO- --user-agent="$USER_AGENT" \
        --header="Accept: text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,*/*;q=0.8" \
        --header="Accept-Language: zh-CN,zh;q=0.9,en;q=0.8" \
        --header="Referer: https://www.douban.com/" \
        --header="Connection: keep-alive" \
        --header="Upgrade-Insecure-Requests: 1" \
        --tries=2 "$DETAIL_URL" \
        | grep -o 'https://img[0-9]\.doubanio\.com/view/subject/s/public/[^"]\+\.jpg' \
        | head -n 1 \
        | sed 's/_\(s\|m\|l\)\.jpg/.jpg/'
}

# ===================== Amazon =====================

fetch_amazon() {
    local NAME="$1"
    local QUERY
    QUERY=$(urlencode "$NAME book")

    echo "🅰️ Amazon search: $NAME" >&2

    # Print the first product image URL, skipping the ._SX resized variants.
    wget -qO- --user-agent="$USER_AGENT" \
        --header="Accept: text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,*/*;q=0.8" \
        --header="Accept-Language: zh-CN,zh;q=0.9,en;q=0.8" \
        --header="Referer: https://www.amazon.com/" \
        --header="Connection: keep-alive" \
        --tries=2 "https://www.amazon.com/s?k=${QUERY}" \
        | grep -o 'https://m.media-amazon.com/images/I/[^"]\+\.jpg' \
        | grep -v '\._SX' \
        | head -n 1
}

# ===================== Wikipedia =====================

fetch_wikipedia() {
    local NAME="$1"
    local QUERY
    QUERY=$(urlencode "$NAME")

    echo "🌍 Wikipedia: $NAME" >&2

    # Print the first .jpg hosted on upload.wikimedia.org in the article page.
    wget -qO- --user-agent="$USER_AGENT" \
        --header="Accept: text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,*/*;q=0.8" \
        --header="Accept-Language: zh-CN,zh;q=0.9,en;q=0.8" \
        --header="Referer: https://zh.wikipedia.org/" \
        --header="Connection: keep-alive" \
        --tries=2 "https://zh.wikipedia.org/wiki/${QUERY}" \
        | grep -o 'https://upload.wikimedia.org/[^"]\+\.jpg' \
        | head -n 1
}

# ===================== Google =====================

fetch_google() {
    local NAME="$1"
    local QUERY
    # The search terms stay in Chinese: "官方 海报" means "official poster".
    QUERY=$(urlencode "$NAME 官方 海报")

    echo "🌐 Google Images: $NAME" >&2

    # Print the first .jpg URL that is not served from gstatic or googleusercontent.
    wget -qO- --user-agent="$USER_AGENT" \
        --header="Accept: text/html,application/xhtml+xml,application/xml;q=0.9,image/avif,image/webp,*/*;q=0.8" \
        --header="Accept-Language: zh-CN,zh;q=0.9,en;q=0.8" \
        --header="Referer: https://www.google.com.hk/" \
        --header="Connection: keep-alive" \
        --tries=2 "https://www.google.com.hk/search?q=${QUERY}&tbm=isch&hl=zh-CN" \
        | grep -o 'https://[^"]\+\.jpg' \
        | grep -v 'gstatic\|googleusercontent' \
        | head -n 1
}

download_cover() {
    local NAME="$1"
    local DIR="$2"
    local TYPE="$3"
    local SAFE_NAME
    # Replace characters that are unsafe in file names with underscores.
    SAFE_NAME=$(echo "$NAME" | sed 's/[\/:*?"<>|()]/_/g')
    local SAVE_PATH="${DIR}/${SAFE_NAME}.jpg"

    [ -f "$SAVE_PATH" ] && validate_image "$SAVE_PATH" && {
        echo "✅ Already exists: $NAME"
        return 0
    }

    echo -e "\n====================================="
    echo "🔍 Fetching: $NAME ($TYPE)"
    echo "====================================="

    sleep "$DELAY"

    local IMG_URL=""
    for SOURCE in douban amazon wikipedia google; do
        # Reset per source so a skipped source (Amazon for movies) does not
        # reuse the URL left over from the previous one.
        IMG_URL=""
        case "$SOURCE" in
            douban) IMG_URL=$(fetch_douban "$NAME" "$TYPE") ;;
            amazon) [ "$TYPE" = "book" ] && IMG_URL=$(fetch_amazon "$NAME") ;;
            wikipedia) IMG_URL=$(fetch_wikipedia "$NAME") ;;
            google) IMG_URL=$(fetch_google "$NAME") ;;
        esac

        [ -z "$IMG_URL" ] && continue

        wget -q --user-agent="$USER_AGENT" \
            --header="Accept: image/avif,image/webp,*/*" \
            --header="Referer: https://www.douban.com/" \
            --timeout="$IMG_TIMEOUT" \
            --tries=2 "$IMG_URL" -O "$SAVE_PATH"

        if validate_image "$SAVE_PATH"; then
            echo "📌 Success: $SOURCE"
            return 0
        else
            rm -f "$SAVE_PATH"
            echo "⚠️ $SOURCE returned an invalid image, trying the next source"
        fi
    done

    echo "❌ All sources failed: $NAME"
    return 1
}

echo "============== Processing book covers =============="

[ -f "$BOOK_SOURCE_FILE" ] && grep 'title:' "$BOOK_SOURCE_FILE" | sed 's/^- *title: //' | while read -r title; do
    [ -n "$title" ] && download_cover "$title" "$BOOK_IMG_DIR" "book" || true
done

echo -e "\n============== Processing movie covers =============="

[ -f "$MOVIE_SOURCE_FILE" ] && grep 'title:' "$MOVIE_SOURCE_FILE" | sed 's/^- *title: //' | while read -r title; do
    [ -n "$title" ] && download_cover "$title" "$MOVIE_IMG_DIR" "movie" || true
done

echo -e "\n🎉 All tasks complete!"

|
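
The title extraction and file-name sanitizing steps in the script can be tried offline, without any network requests. The sketch below is only an illustration: the sample YAML entries and the helper names `extract_titles` and `safe_name` are hypothetical, and the real layout of `books.yml` is assumed to be a list of `- title: ...` entries.

```shell
#!/bin/bash
# Hypothetical sample data; the real books.yml layout is an assumption.
sample_yaml=$(mktemp)
cat > "$sample_yaml" <<'EOF'
- title: Dune
  author: Frank Herbert
- title: What If?
  author: Randall Munroe
EOF

# Same grep/sed pipeline the script uses to pull titles out of the YAML file.
extract_titles() {
    grep 'title:' "$1" | sed 's/^- *title: //'
}

# Same character class the script uses to build SAFE_NAME.
safe_name() {
    printf '%s\n' "$1" | sed 's/[\/:*?"<>|()]/_/g'
}

titles=$(extract_titles "$sample_yaml")

# Show the file name each title would be saved under.
printf '%s\n' "$titles" | while read -r title; do
    echo "$title -> $(safe_name "$title").jpg"
done

rm -f "$sample_yaml"
```

Because the pipeline only strips a leading `- title: ` prefix, indented or quoted titles would pass through unchanged, so a quick dry run like this helps check the file names before any download starts.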